Your calendar looks the same as it did a year ago. The same standups, the same one-on-ones, roughly the same number of meetings with roughly the same amount of stuff said out loud. But far more work is getting done in the gaps between those meetings, and more work getting done means more decisions getting made — which retry strategy, which data model, which library to standardize on, which tradeoff to accept and which to flag. The output went up, but the communication didn’t.

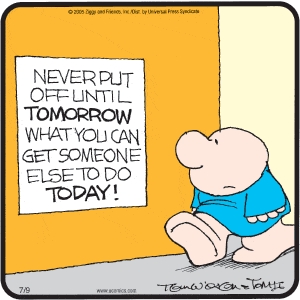

That means you are delegating more than you decided to. Not because you chose to, but because the work stopped waiting for you.

We used to communicate a lot and decide a little; now we decide a lot and communicate about the same amount, or less. The decisions didn’t stop happening. They stopped surfacing on the way to happening, or making it back to you afterward.

AI made delegation cheap and decision visibility expensive. More people can now turn intent into output without waiting for the old checkpoints. But the organization still has to understand which decisions were made, why they were made, and what now depends on them. The examples above are engineering examples because software engineering provides the clearest data points. But this structural shift applies to anyone turning intent into output with AI. Solutions, Product Management, Finance, Marketing: this is happening in every department. Anyone building something, from a spreadsheet to software, is making these calls now, faster than the org around them can register that a call was even made.

Think about what used to surface decisions. When execution was slow, people ran into walls constantly. When someone got stuck or something took longer than expected, you talked about it. Getting stuck forced some communication: a conversation with the manager, a project meeting, an RFC, something that created alignment and contained an explanation. The slowness was annoying. But it served a clear purpose, even if nobody designed it that way: it queued decisions where leaders could see them and be informed. The bottleneck was where you caught things.

That queue is mostly gone. The barriers that used to take days or weeks to clear get cleared in an afternoon. The decision that would have waited for a one-on-one gets resolved before anyone meets. The regular meetings are all still on the calendar. They’ve just stopped being where discussing and informing happen. You and your people are still accountable for the call; it’s just that the information doesn’t get back to everyone in the room.

So here’s what’s actually happening, because most people feel what’s going on without having the words for it: scope quietly inflated at every level, all at once. It did for the person above you, and for the person below you. Nobody held a meeting about it. And yet each person’s reach got wider, which made each manager’s surface area wider, which in turn widened the reach of the layer above them. The number of decisions made at each of those levels inflated to match. And those decisions are getting made wherever the work is happening.

And now everyone’s work reaches further than it used to. AI lets people stretch deep into other people’s territory — other roles, and even other disciplines — by appearing to cover the knowledge gap that used to prevent, or at least deter, that overreach. And that reach grew faster than anyone’s judgment about when to use it.

This is a structural problem, not a stylistic one, and you don’t get to opt out of it. The only thing you get to decide is whether those decisions are made with enough context to be good decisions, and whether anyone can see them after the fact.

Why Decisions Stay Quiet

A decision made below your line of sight has two ways to reach you. Someone tells you, or you find out later the hard way, usually when something breaks. One is a sentence in a standup; the other is a postmortem. And which one you get is almost entirely a question of whether the person who made the call felt it was their job to surface the issue.

When a builder goes quiet, they’re not usually blocked by technology. They’re blocked on a question they can’t or don’t want to articulate: is this mine to decide? Sometimes it’s “I’m not sure I’m allowed to make this decision.” Sometimes it’s “I don’t want to be the one responsible for it.” Sometimes it’s “I don’t have enough context to keep going and I’m not sure where to find that context.” Those are three different problems that are usually wearing the same outfit — “AI can’t do this” — and none of them is actually about what AI can or can’t do.

There’s a fourth version that doesn’t quite look like someone getting stuck. It’s that the individual actively decides not to act. They stop short because finishing the work would mean stepping into another team’s territory. They tell themselves the polite thing to do is leave it for whoever normally owns it. Or maybe that’s just more responsibility than they want to take on.

Any way you slice it, that’s still a decision. It just looks like deference.

The person made a call about scope and ownership and didn’t name it as such, so it never reaches you either. Not surfacing a decision and quietly deciding not to act are the same problem from your side of the table: a choice got made below your line of sight and you have no idea that it happened.

What all of these have in common is that the person stopped for some reason and probably didn’t surface the real why. That why is the signal you need most, because it shows you where to make the operational investment that empowers people: here is where the work is actually hard, which is the same as saying here is where we should invest, so the next person doesn’t get stuck in the same place.

But that signal needs at least two things to survive. One, the person has to notice they made a call at all: that the quiet workaround, the abandoned task, the thing the model suggested, was a decision and not just “the work.” And two, they have to feel it’s their concern to raise. That combination, noticing your own decisions and treating them as worth surfacing, is most of what agency actually is. And it’s what separates a team that merely ships from one that stays aligned while it ships. A group of capable people each making good private calls isn’t a team; it’s a set of parallel tracks that quietly drift apart. Surfacing is what keeps them converging.

The Invisible Delegation Cascade

Most people sense this layer is there. Fewer have come to terms with how far down it goes, or what it actually costs.

It isn’t only managers who are delegating more without noticing. The builders are doing it too. They are delegating their decisions to the model. Someone working with an AI accepts a retry policy, a schema choice, a new library, and a hundred small structural calls the model proposed and the builder waved through. Each one is a decision that used to be part of the process of writing code. Most never get registered as decisions. They get registered as “the code (A-)I wrote today.” The human is in the loop, technically. But the loop is moving fast enough that a lot of what passes through it doesn’t get the requisite level of scrutiny. It just gets accepted.

The human in the loop has become a crossing guard, waving everyone through without ever looking at the traffic.

Murphy Trueman made a version of this point about design systems: hand an agent a system with undocumented gaps and it fills them with the internet’s average, silently, returning a guess that looks identical to the parts it got right. The same thing happens to decisions, one level up. Murphy is describing the gap in the design and the code; I’m agreeing and extending it org-wide. The mechanism is identical: a silent fill that looks exactly like a choice someone made on purpose.

So the delegation is happening at every layer at once. The org delegated more to the manager; the manager delegated more to the engineer; the engineer delegated more to the model. And at each hop, decisions get made and not all are surfaced. By the time anything reaches you as the leader, you’re not looking at the decision anymore. You’re looking at the downstream impact of decisions nobody quite remembers making, sometimes not even the person or model who made them, with no clear line back to the calls that produced that impact.

I’m not suggesting you claw it all back. You can’t, nor should you want to. Pushing decisions down to the people closest to the work is the right move. It was the right move even before AI made it unavoidable and increasingly the norm. The problem isn’t that the decisions moved. It’s that now they’re moving invisibly. A decision you can see is one you can revisit when it turns out to be problematic. A decision that dissolved into “the code (A-)I wrote today” is one you find three months later, load-bearing, with everyone already building on top of it like it was decided with intention.

Infrastructure, Not Hygiene

The obvious objection is that, if every retry policy and schema choice is a decision, surfacing all of them would bury you. Nobody can ingest that amount of information. You’re right to call that out, and that’s exactly the point I’m making. The goal was never to see every decision. It’s to make sure the ones that matter are yours to make, and that the rest stay reconstructable after the fact — so the load-bearing call you’d otherwise never have noticed is at least findable when it starts holding weight.

That’s the bar: not visibility into everything, just traceability for the things that come to matter. Which means the move isn’t control — control never scaled to this volume and it never will — and it isn’t capturing every keystroke either. The goal is legibility: the decisions that matter need to be visible as they happen, or at least traceable once they do.

The unglamorous mechanism for that is documentation. But notice that it now has to run in two directions, not one.

The familiar direction is outward and upward: the person records what they decided and why, so a manager, an architect, or another team can see the call without reconstructing it from the code. That was always good practice. It’s load-bearing now in a way it wasn’t before. Meetings used to carry this, and getting stuck used to force the conversation. But with AI-assisted building, those discussions don’t happen, because AI fills in the gaps. Now writing it down is the only channel left that the new pace didn’t break.

Now to the unfamiliar direction. Good documentation practice is also what lets the model tell the builder what it decided on their behalf. AI is great at creating lots of documentation that most humans never read. The structural calls that got waved through only become inspectable if the work captures them. Done well, the builder can go back and interrogate the decisions that were technically theirs and functionally the model’s. Done poorly, there’s nothing to go back to besides the code and a prayer.

That’s the whole shift in one line: documentation is now a strategic investment, not just bureaucratic hygiene. Documentation used to be how you reported a decision after you made it. Now it’s how a decision becomes visible to anyone at all — including the person who supposedly made it.
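To make the two directions concrete, here is a minimal sketch of what a captured decision could look like. Everything in it is an assumption for illustration: the field names, the sample records, the names and dates, and the "two or more dependents" threshold are all hypothetical, not a prescribed format. The point is only that one small record can serve both directions: a log line that travels outward and upward, and a filter that lets the builder review what the model proposed on their behalf.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class DecisionRecord:
    """One call worth being able to find later (hypothetical schema)."""
    summary: str          # what was decided
    rationale: str        # why, in a sentence
    proposed_by: str      # "human" or "model"
    accepted_by: str      # the person accountable for the call
    decided_on: date
    dependents: List[str] = field(default_factory=list)  # work now built on this call


def as_log_line(r: DecisionRecord) -> str:
    """Outward and upward: a one-line record someone else can read without the code."""
    return (f"{r.decided_on.isoformat()} | {r.summary} | why: {r.rationale} | "
            f"proposed by {r.proposed_by}, accepted by {r.accepted_by}")


def waved_through(records: List[DecisionRecord]) -> List[DecisionRecord]:
    """Inward: the calls the model proposed, so the builder can go back and interrogate them."""
    return [r for r in records if r.proposed_by == "model"]


def load_bearing(records: List[DecisionRecord], min_dependents: int = 2) -> List[DecisionRecord]:
    """Calls that other work now depends on (the threshold is an arbitrary assumption)."""
    return [r for r in records if len(r.dependents) >= min_dependents]


# Hypothetical sample data, echoing the retry-policy and schema examples from earlier.
records = [
    DecisionRecord("Retry failed calls 3 times with backoff", "Upstream API is flaky",
                   "model", "dana", date(2025, 1, 6), ["billing-sync", "report-export"]),
    DecisionRecord("Store events in one wide table", "Fewer joins for dashboards",
                   "human", "dana", date(2025, 1, 7), ["dashboards"]),
]

for r in load_bearing(records):
    print(as_log_line(r))
```

Most records like these would never be read, and that is fine. The sketch only tries to show that the load-bearing ones stay findable once they start holding weight.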

None of this requires a new initiative. It doesn’t require redrawing the org, though some companies are doing exactly that — Coinbase’s CEO has made the case for it, which I think reaches for a structural fix to a problem that should be a day-to-day operational fix.

It requires only that you accept what’s already true: more is being decided, by more people and more tools, further from your line of sight than at any point in your career. You need to treat those decisions as something worth being able to see.

That starts with making it safe to surface a stuck point. And “safe” isn’t a policy — it’s something that’s decided every time someone brings you a half-made decision they likely already shipped. Your reaction either tells them surfacing these things is welcome or it teaches them to keep the next one to themselves.

Documenting context and surfacing stuck points aren’t structurally difficult. They are just easy to skip when everyone’s busy and raw velocity looks high, which is exactly when most teams skip them. But those choices are being made either way, by your people and by the models they work with, at a pace the old calendar can no longer catch. The only thing you actually get to decide is whether you want to see them.