Unless you’re an AI vendor, you don’t need an AI strategy. You need an AI adoption strategy. This distinction matters. “AI strategy” implies a comprehensive vision for how AI transforms your business—a roadmap, a framework, maybe even a consultant or two. That’s the right frame if you’re selling AI products or reimagining your SaaS business through an AI lens. But if you’re like most CTOs, AI is just another tool. A powerful one, but still a tool. And tools only matter if people actually use them.

If you get the change management and adoption strategy wrong, the costs compound. When AI initiatives fizzle, the conclusion is usually “AI isn’t ready for us” or “our team isn’t there yet.” This is a strategic misread based on a failed rollout, not a failed technology. Worse, every failed initiative makes the next one harder. People learn that AI is another ivory-tower corporate priority to be waited out. Meanwhile, your best people, the ones who want to use modern tools and techniques, watch peers at other companies actually ship things. That gap is visible, and it’s frustrating enough to make them leave.

The real question isn’t “what’s our AI strategy?” It’s “how do we get people using AI effectively?”

Training Doesn’t Change Behavior

Nearly every board is coming in hot looking for AI adoption. Checking the box on usage typically starts with training. It feels strategic. You can measure completion rates, track certifications, and report that 87% of your technology organization has completed AI fundamentals and safety. Box checked.

But training creates awareness, not motivation. You can teach someone how a tool works without making them want to use it. And in my experience, most people who complete AI training go right back to doing things the way they’ve always done them. The path to value isn’t clear to them. The adoption problem isn’t a lack of knowledge anymore; it’s a lack of desire. They’ll complete the modules, pass the quiz, and never use the tools again.

Adoption Happens for Personal Reasons

Good leadership isn’t about forcing compliance. It’s about getting people to do what the business needs them to do for their own reasons. This isn’t manipulation; it’s alignment. Mandates work when people already believe in the thing; you’re just formalizing what they’d do anyway. But when people don’t see the value, no amount of pressure changes that. They do exactly what’s required, usually the bare minimum, and nothing more.

This is where most AI initiatives fail. They’re structured around what the organization wants (efficiency, innovation, competitive advantage) rather than what individuals want: less tedious work, solving problems that annoy them, or looking good in front of their peers.

When someone discovers that AI can eliminate or minimize a task they hate, they don’t need a mandate. When they realize they can prototype something in an afternoon that would have taken a week, they’re not waiting for permission. The adoption happens because it serves them. Your job as a leader is to create the conditions where people make that discovery for themselves.

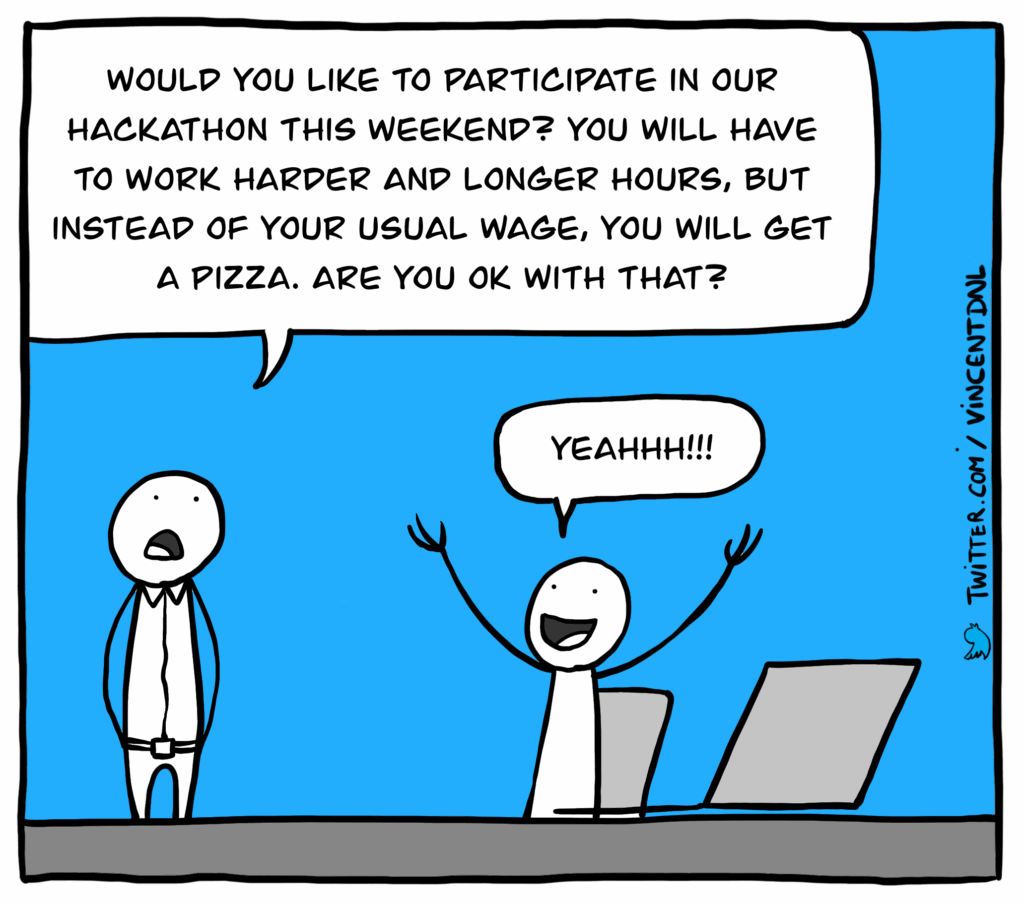

Why Hackathons Work

A hackathon forces experimentation while preserving autonomy. I use a similar approach when teaching jiu-jitsu: constraint-based training. The idea is simple: you remove someone’s default options to force them to develop a new skill. If a student always escapes by using strength to push someone off, you have them spar with the constraint of not being allowed to use their arms. They can’t rely on their usual approach, so they’re forced to find another way.

Here is the hackathon version of this:

- The Challenge: Do something work-related that solves a problem for yourself, your team, or the company

- The Constraint: You have to use AI

That combination of instructions is important. The constraint removes the activation energy problem: people who might never try AI on their own will try it when it’s the explicit point of the exercise. The freedom ensures they’re working on something relevant, something impactful, or something that they actually care about.

You’re not teaching AI (though it’s smart to do some teaching to kickstart the event for people who need it). You’re letting people solve their own problems with it. And when someone solves a problem they care about using a tool they’d never tried, they don’t need convincing anymore. They have experienced it for themselves.

People know their own problems and daily friction better than anyone else in the business. Give them time, space, and tools to solve those problems in a better way, and a forum to show it off, and you’re helping them help themselves.

Two examples from our most recent hackathon:

- Someone from our solutions team, technical but not an engineer, built a browser-based audience profiling tool to demo our platform’s capabilities during sales calls. When he showed it, the analytics team’s reaction was immediate: “This would actually be easy to build into the real product.” It went from hackathon demo to roadmap item to shipped feature in a single sprint. He could explain what he wanted clearly enough that AI built something visual and functional, something engineering could look at and quickly translate into production code.

- A front-end team was frustrated with how difficult it was to navigate between our internal products. They’d wanted something like the Mac Spotlight search pattern that’s become standard in operating systems. During the hackathon, they built it — not a prototype, but something immediately deployable. All that remained was polish and edge cases. This feature wasn’t anywhere near the front of the roadmap. It shipped a sprint later anyway because they could build it in a day.

Both of these examples came from people who went into the hackathon somewhere between latent adopters and creators, two of the three groups described in the next section. These projects shifted them squarely into the creator group.

The demos at the end surface insights you won’t get any other way. People get a few minutes to show what they built, talk about their challenges, and show off a little. Everyone watching sees how someone else solved a similar problem differently, what problems people chose to work on, and what others think is important enough to spend a day on. With vibe coding, people outside engineering can participate meaningfully, which means technical teams see what problems other departments actually face, and non-technical participants discover that building good software is harder than it looks.

The Diagnostic Value

Training completion tells you who showed up. A real-life exercise, like a hackathon, tells you who your people actually are. From running the latest constraint-based hackathon, I found people fell into three distinct groups:

- Creators. People who took to AI immediately — not just using it, but pushing it, finding its edges, combining it with other tools in unexpected ways. Some I’d already identified as high performers. Others surprised me.

- Latent adopters. People with real creative potential who simply didn’t know AI was this accessible. They’d assumed it was complicated, required specialized knowledge, or wasn’t meant for their kind of work. Once they saw how low the barrier actually was, they were off and running. This group was the biggest win. Training might have eventually reached them, but a hackathon compressed months of gradual adoption into a single day.

- Resisters. People who are going to keep doing things the way they’ve always done them, regardless of what tools are available. They exist in every organization. A hackathon doesn’t convert them, but it identifies them. This is useful information when you’re thinking about where to invest your coaching energy.

You won’t learn any of this from training metrics. Completion rates don’t distinguish between someone who’s genuinely excited and someone who clicked through the modules while checking email.

Sanctioning the Inevitable

Skeptical leaders will rightly see these success stories and also see the risk. They see non-engineers shipping code and shadow AI projects popping up outside of standard governance. Hackathons don’t eliminate governance; they reveal where governance is mismatched to reality.

And here’s the reality: your creators are already using these tools. If you haven’t provided a paved path, they’re using personal accounts and unvetted models, and pasting proprietary data into browser extensions. You don’t prevent shadow AI by banning it; you prevent it by providing a better, safer alternative.

A hackathon serves as a “controlled burn.” By providing a sanctioned environment with enterprise-grade LLM access, clear data privacy boundaries, and a dedicated period, you bring that experimentation into the light. You aren’t just encouraging adoption; you’re directing it into a structure where you can actually see what’s happening. It’s much easier to provide guidance on a tool someone built in a day than it is to hunt down weeks’ or months’ worth of “stealth” automation.

The Reframe

Your AI strategy is your adoption strategy. Tool selection, use case identification, governance frameworks: all of that is downstream of whether people actually use the thing.

Hackathons aren’t a complete solution. Sustained adoption requires ongoing reinforcement — regular opportunities to experiment, regular reminders, visible wins, and leaders who model the behavior. But as a way to kickstart adoption, surface latent potential, and learn who your people actually are, they’re hard to beat.

The technology works. The question is whether your people will. That depends on whether you’re pushing them toward AI or creating conditions where they pull it toward themselves.